YEAR Conference 2015: Your chance to win 5000 Euros for your Open Science project idea

Peter Kraker - February 27, 2015 in Announcements, Featured

Acknowledgements: Thanks to the YEAR Board for contributing to this blog post!

Are you a young researcher with an Open Science project idea? Here’s a chance to win 5000 Euros to make it happen: The Young European Associated Researchers (YEAR) Network organises its Annual Conference on 11-12 May 2015 at VTT in Helsinki/Espoo (Finland) with a focus on Open Science. Registration for the conference is now open.

The YEAR Annual Conference is a two-day event for young researchers that offers a platform for exchange and training focused on key aspects of EU projects. It gives young researchers a solid basis for successfully integrating both open access and open research data concepts into Horizon 2020 projects and into their current research workflows.

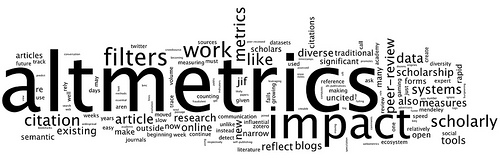

“Sharing is caring”! This is probably a good way to describe what Open Science really means: a new approach to science to share ideas, research results, research data, and publications with the rest of the world, through the newly available network technologies.

Open science approaches are still rather new, and many researchers are not yet familiar with them. Young researchers in particular struggle when confronted with open access, open research data, and the issues related to them. This is reinforced by a survey recently conducted by YEAR, in which many of the young researchers surveyed said they were inexperienced with open science and unsure about its implications. About 80% of the survey participants considered integrating Open Science into research training to be one of the most effective ways of raising awareness of it. The conference responds to this demand: it aims to give young researchers a solid basis for successfully implementing both open access and open research data concepts in H2020 projects, and to highlight ways of integrating them into current research workflows.

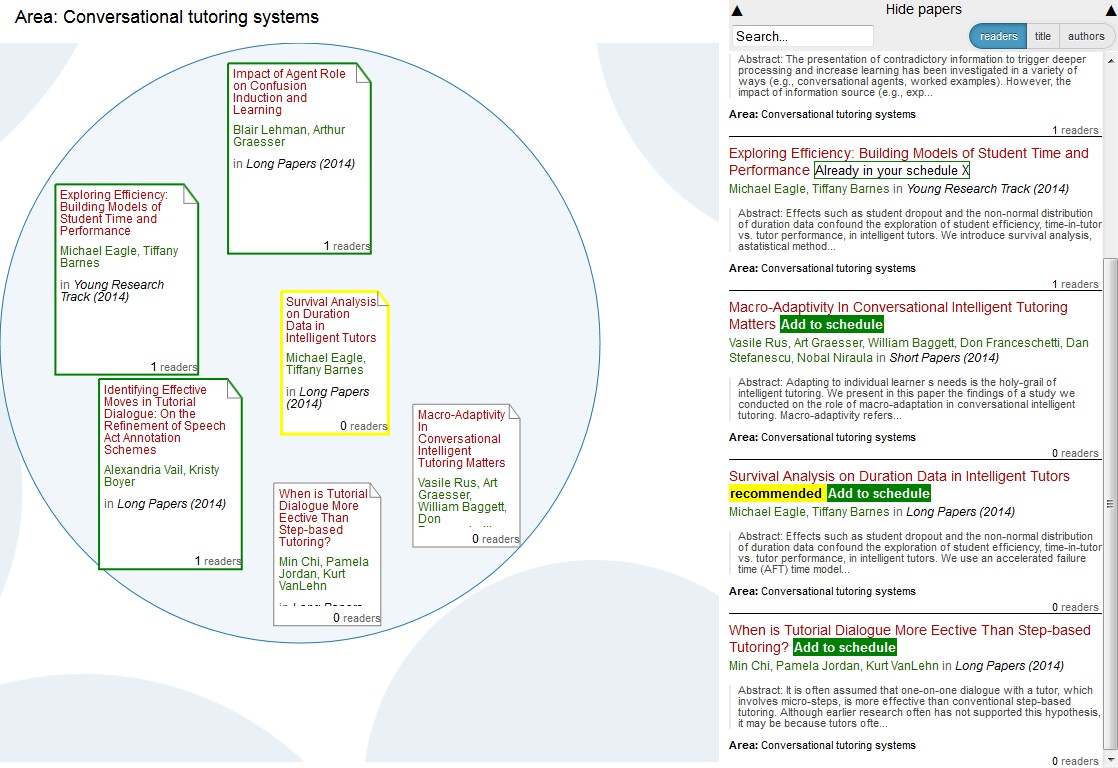

Conference Day 1: invited international experts will introduce strategies for fulfilling open access requirements in H2020 projects and Open Data Pilots. The goal of Day 1 is to give attendees the necessary background information and useful tools for open access publishing and for sharing open research data.

Conference Day 2: young researchers are invited to bring a project idea that relies on or promotes open research data or other aspects of open science. They will be challenged to defend their idea and to develop it further with the other young researchers for a chance to win one of the two YEAR Awards. The goal is for the young researchers to gain hands-on experience in developing strong project ideas and to find potential project partners.

Confirmed speakers and trainers: Jean-Claude Burgelman (European Commission, DG Research and Innovation), Petr Knoth (The Open University, UK), Jenny Molloy (OKFN, University of Oxford, UK), Peter Kraker (KNOW Center, AT)

YEAR Awards: the two most outstanding project ideas defended and developed during Conference Day 2 will receive an award. Each YEAR Award consists of a European Project Management training course and 5000 euros to further develop the project idea.

Please submit your project idea for the YEAR Annual Conference 2015 by Thursday 16 April 2015 (deadline extended from Thursday 2 April 2015).

The conference is supported by the EU project FOSTER and is organised by YEAR in cooperation with VTT, AIT Austrian Institute of Technology, KNOW Center Graz, and SINTEF. The Open Knowledge Foundation is a dissemination partner.

Conference links: http://www.year-network.com/homepage/year-annual-conference-2015

https://www.fosteropenscience.eu/event/year-annual-conference-2015-open-science-horizon-2020